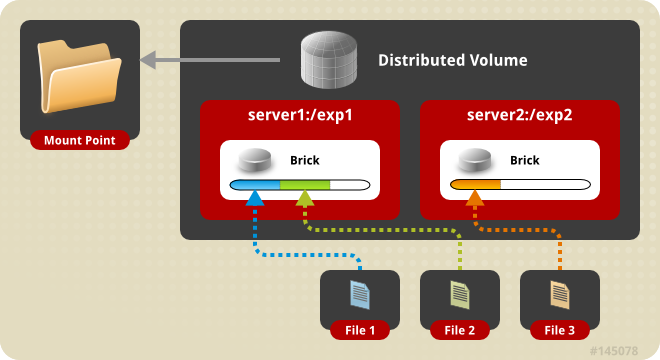

In one of my earlier posts on GlusterFS, we went through the steps on how to setup a Distributed Storage Volume, where the end result was to have scalable storage, where size was the requirement.

What will we be doing today with GlusterFS?

Today, we will be going through the steps on how to setup a Replicated Storage Volume with GlusterFS, where we will have 3 GlusterFS Nodes, and using the replication factor of 3.

Replication Factor of 3:

In other words, having 3 copies of our data and in our case, since we will have 3 nodes in our cluster, a copy of our data will reside on each node.

What about Split-Brain:

In Clustering, we get the term Split-Brain, where a node dies or leaves the cluster, the cluster reforms itself with the available nodes and then during this reformation, instead of the remaining nodes staying with the same cluster, 2 subset of cluster are created, and they are not aware of each other, which causes data corruption, here’s a great resource on Split-Brain

To prevent Split-Brain in GlusterFS, we can setup a Arbiter Volume. In a Replica Count of 3 and Arbiter count of 1: 2 Nodes will hold the replicated data, and the 1 Node which will be the Arbiter node, will only host the file/directory names and metadata but not any data. I will write up an article on this in the future.

Getting Started:

Let’s get started on setting up a 3 Node Replicated GlusterFS. Each node will have an additional drive that is 50GB in size, which will be part of our GlusterFS Replicated Volume. I will also be using Ubuntu 16.04 as my linux distro.

Preparing DNS Resolution:

I will install GlusterFS on each node, and in my setup I have the following DNS entries:

- gfs01 (10.0.0.2)

- gfs02 (10.0.0.3)

- gfs03 (10.0.0.4)

Preparing our Secondary Drives:

I will be formatting my drives with XFS. Listing our block volumes:

1 2 3 4 | |

Creating the FileSystem with XFS, which we will be running on each node:

1

| |

Then creating the directories where our bricks will reside, and also add an entry to our /etc/fstab so that our disk gets mounted when the operating system boots:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 | |

After this has been done, we should see that the disks are mounted, for example on node: gfs01:

1 2 3 4 | |

Installing GlusterFS on Each Node:

Installing GlusterFS, repeat this on all 3 Nodes:

1 2 3 | |

In order to add the nodes to the trusted storage pool, we will have to add them by using gluster peer probe. Make sure that you can resolve the hostnames to the designated IP Addresses, and that traffic is allowed.

1 2 3 | |

Now that we have added our nodes to our trusted storage pool, lets verify that by listing our pool:

1 2 3 4 5 | |

Great! All looks good.

Create the Replicated GlusterFS Volume:

Let’s create our Replicated GlusterFS Volume, named gfs:

1 2 3 4 5 6 7 | |

Now that our volume is created, lets list it to verify that it is created:

1 2 | |

Now, start the volume:

1 2 | |

View the status of our volume:

1 2 3 4 5 6 7 | |

Next, view the volume inforation:

1 2 3 4 5 6 7 8 9 10 11 12 | |

Security:

From a GlusterFS level, it will allow clients to connect by default. To authorize these 3 nodes to connect to the GlusterFS Volume:

1

| |

Then if you would like to remove this rule:

1

| |

Mount the GlusterFS Volume to the Host:

Mount the GlusterFS Volume to each node, so we will have to mount it to each node, and also append it to our /etc/fstab file so that it mounts on boot:

1 2 | |

Verify the Mounted Volume:

Check the mounted disks, and you will find that the Replicated GlusterFS Volume is mounted on our /mnt partition.

1 2 3 4 5 | |

You will note that GlusterFS Volume has a total size of 47GB usable space, which is the same size as one of our disks, but that is because we have a replicated volume with a replication factor of 3: (47 * 3 / 3)

Now we have a Storage Volume which has 3 Replicas, one copy on each node, which allows us Data Durability on our Storage.